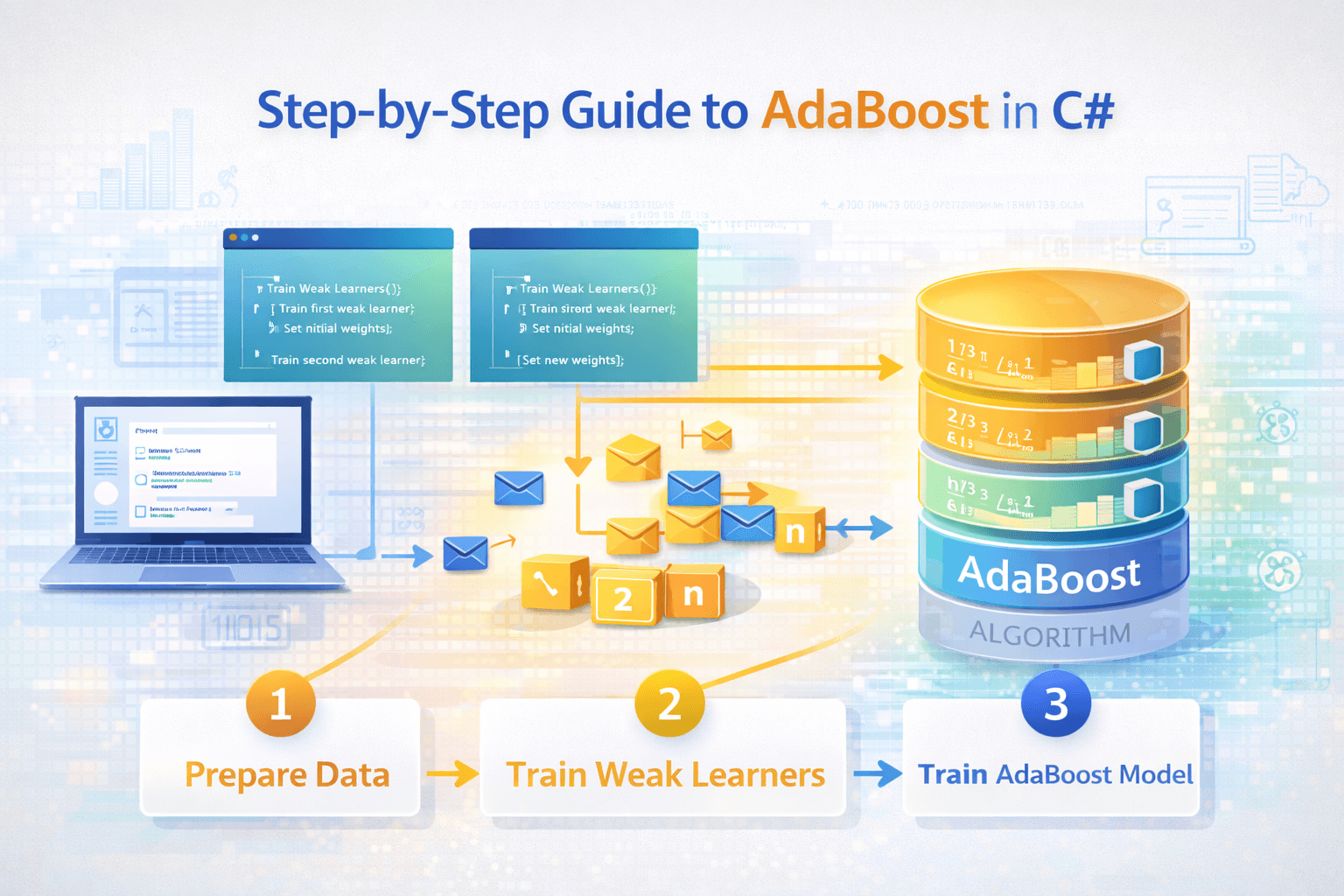

AdaBoost is an ensemble learning algorithm that combines weak learners into a stronger classifier. It is best known for classification, though regression variants exist. AdaBoost improves on a single weak model by assigning higher weights to misclassified data points, so that later learners concentrate on them. This guide implements AdaBoost binary classification in C#, using the Accord.NET library, and shows how to train, evaluate, and tune the model in your own projects.

Understanding AdaBoost

Image Source: unsplash

What is AdaBoost?

AdaBoost, short for Adaptive Boosting, is an ensemble learning technique that combines multiple weak learners into a strong classifier. It is called adaptive because each new learner is trained with extra emphasis on the examples that earlier learners got wrong.

Basic principles of boosting

Boosting is a method that enhances model performance by training weak learners sequentially. Each learner corrects the errors of its predecessor. Training continues for a fixed number of rounds or until the error stops improving.

- Sequential Training: Weak learners train one after another.
- Error Correction: Each learner focuses on previous mistakes.
- Improved Accuracy: The final model becomes highly accurate.

How AdaBoost improves model accuracy

AdaBoost assigns a weight to each data point. Initially, all points have equal weights. After each round, the algorithm increases the weights of misclassified points and decreases the weights of correctly classified ones. The next weak learner therefore concentrates on the examples the ensemble still gets wrong.

- Initial Weight Assignment: Equal weights for all data points.
- Weight Adjustment: Higher weights for misclassified points.
- Iterative Learning: The model learns progressively.
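The weight adjustment can be made concrete with the formulas of classic discrete AdaBoost: a learner with weighted error `e` receives the vote weight `alpha = 0.5 * ln((1 - e) / e)`, each misclassified point's weight is multiplied by `exp(alpha)`, each correct point's weight by `exp(-alpha)`, and the weights are renormalized. The following is a minimal sketch in plain C# with no library dependency; the names `WeightUpdateSketch` and `Reweight` are hypothetical and chosen only for illustration.

```csharp
using System;
using System.Linq;

public static class WeightUpdateSketch
{
    // One round of the discrete AdaBoost weight update.
    // weights: current sample weights, assumed to sum to 1.
    // misclassified[i]: true if the current weak learner got sample i wrong.
    public static (double Alpha, double[] Weights) Reweight(double[] weights, bool[] misclassified)
    {
        // Weighted error of the weak learner, clamped so the logarithm stays finite.
        double error = weights.Where((w, i) => misclassified[i]).Sum();
        error = Math.Min(Math.Max(error, 1e-10), 1 - 1e-10);

        // Vote weight of this learner: larger when its weighted error is smaller.
        double alpha = 0.5 * Math.Log((1 - error) / error);

        // Raise the weights of mistakes, lower the rest, then renormalize to sum to 1.
        double[] raw = weights
            .Select((w, i) => w * Math.Exp(misclassified[i] ? alpha : -alpha))
            .ToArray();
        double total = raw.Sum();
        return (alpha, raw.Select(w => w / total).ToArray());
    }
}
```

With four equally weighted samples and one mistake, the misclassified sample's weight rises from 0.25 to 0.5, and the three correct samples drop to one sixth each. After every update the misclassified points carry half of the total weight, which is why the next learner cannot ignore them.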

Key Components of AdaBoost

Understanding the components of AdaBoost is crucial. These components work together to enhance model performance.

Weak learners

Weak learners are simple models that perform only slightly better than random guessing. Decision trees with one level, called decision stumps, often serve as weak learners. A stump classifies by comparing a single feature against a threshold.

- Simple Models: Weak learners are basic classifiers.
- Slight Improvement: Perform marginally better than chance.
- Decision Trees: Common choice for weak learners.
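To show what such a weak learner looks like, here is a minimal sketch of a decision stump trained on weighted data, written in plain C#. It is not Accord.NET's `DecisionStump`; the class `StumpSketch` and its members are hypothetical, labels are assumed to be +1 or -1, and the exhaustive search is quadratic in the number of samples, so it only suits small datasets.

```csharp
using System;

// A one-level decision tree: predicts +1 or -1 by comparing one feature to a threshold.
public sealed class StumpSketch
{
    public int Feature;       // index of the feature to test
    public double Threshold;  // split point
    public int Polarity = 1;  // +1: predict +1 when value > threshold; -1: the reverse

    public int Predict(double[] x) => (x[Feature] > Threshold ? 1 : -1) * Polarity;

    // Exhaustively picks the feature, threshold, and polarity with the lowest weighted error.
    // labels must be +1 or -1; weights are the current AdaBoost sample weights.
    public static StumpSketch Fit(double[][] inputs, int[] labels, double[] weights)
    {
        var best = new StumpSketch();
        double bestError = double.MaxValue;
        for (int f = 0; f < inputs[0].Length; f++)
            foreach (double[] row in inputs)               // candidate thresholds: observed values
                foreach (int polarity in new[] { 1, -1 })
                {
                    var candidate = new StumpSketch { Feature = f, Threshold = row[f], Polarity = polarity };
                    double error = 0;
                    for (int i = 0; i < inputs.Length; i++)
                        if (candidate.Predict(inputs[i]) != labels[i]) error += weights[i];
                    if (error < bestError) { bestError = error; best = candidate; }
                }
        return best;
    }
}
```

Because the error is weighted, the same search returns a different stump once AdaBoost has shifted weight onto the points that earlier stumps misclassified.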

Weight adjustments

Weight adjustments play a vital role in AdaBoost. After each round, the algorithm raises the weights of misclassified points and lowers the rest, so the next weak learner is penalized most for repeating earlier mistakes.

- Weight Modification: Adjusts weights based on classification.
- Focus on Errors: Emphasizes misclassified points.
- Effective Learning: Helps the model improve accuracy.

AdaBoost combines the results of its weak learners through a weighted vote, giving more say to learners with lower weighted error. Adding learners round by round mainly reduces bias, and in practice the ensemble often generalizes better than any single weak learner.
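The weighted vote can be sketched as the sign of the alpha-weighted sum of the weak learners' +1/-1 predictions. This is a minimal illustration in plain C#, not the Accord.NET implementation; `WeightedVoteSketch` and the tuple field names are hypothetical.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;

public static class WeightedVoteSketch
{
    // Combines weak-learner votes: the sign of the alpha-weighted sum of their +1/-1 predictions.
    // Each entry pairs a learner's vote weight (Alpha) with its prediction function (Learner).
    public static int Predict(IEnumerable<(double Alpha, Func<double[], int> Learner)> ensemble, double[] x)
    {
        double score = ensemble.Sum(member => member.Alpha * member.Learner(x));
        return score >= 0 ? 1 : -1;   // ties are resolved toward +1 in this sketch
    }
}
```

For example, one learner with vote weight 0.9 predicting +1 outvotes two learners with weights 0.4 and 0.3 predicting -1, because 0.9 exceeds 0.7.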

Setting Up the Development Environment

Installing Necessary Tools

Visual Studio setup

Visual Studio serves as a powerful IDE for C# development. Download Visual Studio from the official website. Choose the version that fits your needs. Follow the installation instructions carefully. Select the workloads related to .NET development. This setup ensures a smooth coding experience.

Installing Accord.NET library

The Accord.NET library provides essential tools for machine learning in C#. Open Visual Studio and navigate to the NuGet Package Manager. Search for Accord.MachineLearning. Click on the package and select “Install”. This library offers a framework to implement AdaBoost efficiently.

Configuring the Environment

Project setup in C#

Begin by creating a new project in Visual Studio. Select a Console App (.NET Core) for simplicity. Name the project appropriately. This setup forms the foundation for your AdaBoost implementation. Ensure all settings align with your project requirements.

Importing necessary libraries

Open the Program.cs file in your project. Add the necessary using directives at the top:

```csharp
using Accord.MachineLearning;
using Accord.MachineLearning.Boosting;
using Accord.MachineLearning.Boosting.Learners; // DecisionStump and ThresholdLearning
```

These imports give access to the AdaBoost functionality. Ensure the Accord.MachineLearning package is correctly referenced by the project. This step completes the environment configuration for your AdaBoost model.

AdaBoost Binary Classification Using C#

Initializing the Model

Creating the AdaBoost classifier

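Start by creating the AdaBoost learning algorithm with the Accord.NET library. In Accord.NET 3.x, `AdaBoost<TModel>` is the trainer rather than the trained classifier, and it is configured through properties, so the number of weak learners is set through `MaxIterations`:

```csharp
int nLearners = 100;
// ThresholdLearning (Accord.MachineLearning.Boosting.Learners) trains each decision stump
var adaBoost = new AdaBoost<DecisionStump>()
    { Learner = (p) => new ThresholdLearning(), MaxIterations = nLearners };
```

The code configures AdaBoost to train up to 100 weak learners, with decision stumps serving as the weak learners. This setup forms the basis for your binary classification task.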

Setting parameters

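Set properties to control the AdaBoost model’s behavior. In Accord.NET 3.x, the documented controls on `AdaBoost<TModel>` are the maximum number of boosting rounds and the convergence tolerance:

```csharp
adaBoost.MaxIterations = 50; // upper bound on boosting rounds (weak learners)
adaBoost.Tolerance = 1e-3;   // stop once the relative change in error falls below this
```

These settings define how long the model keeps learning. More iterations may improve accuracy at the cost of training time. Accord.NET’s `AdaBoost<TModel>` does not appear to expose a learning rate, so check the API of your installed version before relying on one.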

Training the Model

Preparing the dataset

Prepare a dataset for training. Use a binary classification dataset for this purpose. Ensure the data is clean and formatted correctly.

```csharp
double[][] inputs = { /* one double[] of features per sample */ };
int[] outputs = { /* one class label per sample, in the same order */ };
```

The dataset should pair each row of input features with its label. Proper preparation ensures effective training.
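Before training, it can help to check that the arrays really form a usable binary dataset. The sketch below is one possible set of checks in plain C#; `DatasetCheckSketch`, `Validate`, and the toy data in the usage note are hypothetical, and the 0/1 label encoding is an assumption that should be matched to what your library expects.

```csharp
using System;
using System.Linq;

public static class DatasetCheckSketch
{
    // Throws if the arrays cannot be used as a binary-classification training set.
    public static void Validate(double[][] inputs, int[] outputs)
    {
        if (inputs.Length == 0 || inputs.Length != outputs.Length)
            throw new ArgumentException("Need at least one row and exactly one label per row.");

        int width = inputs[0].Length;
        if (inputs.Any(row => row.Length != width))
            throw new ArgumentException("All rows must have the same number of features.");

        if (inputs.Any(row => row.Any(v => double.IsNaN(v) || double.IsInfinity(v))))
            throw new ArgumentException("Features must be finite numbers.");

        if (outputs.Distinct().Count() != 2)
            throw new ArgumentException("Binary classification needs exactly two distinct labels.");
    }
}
```

A small toy dataset such as `{ {1.0, 2.0}, {2.0, 1.0}, {6.0, 7.0}, {7.0, 6.0} }` with labels `{ 0, 0, 1, 1 }` passes these checks, while a dataset with a missing label or a ragged row is rejected before it reaches the trainer.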

Training process with code snippets

Train the AdaBoost model using the prepared dataset. In Accord.NET 3.x, `Learn` returns the trained classifier, so keep the result:

```csharp
Boost<DecisionStump> classifier = adaBoost.Learn(inputs, outputs);
```

The `Learn` method runs the boosting rounds. After each round it raises the weights of misclassified data points, so later stumps concentrate on them.

Evaluating the Model

Performance metrics

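Evaluate the AdaBoost model using performance metrics. Metrics like accuracy and precision help assess the model’s effectiveness. Accuracy is the fraction of predictions that match the labels:

```csharp
// classifier is the Boost<DecisionStump> returned by adaBoost.Learn(inputs, outputs); requires using System.Linq
bool[] predicted = classifier.Decide(inputs);
double accuracy = predicted.Zip(outputs, (p, y) => p == (y > 0)).Count(ok => ok) / (double)outputs.Length;
Console.WriteLine("Model Accuracy: " + accuracy);
```

The code calculates accuracy on the training data, assuming the positive class is encoded as a label greater than zero. Training accuracy is optimistic, so confirm the result on data the model has not seen.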
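Accuracy alone can mislead when the classes are imbalanced, which is why precision and recall are usually reported alongside it. The following sketch computes all three from boolean predictions and labels in plain C#; `BinaryMetricsSketch` and `Compute` are hypothetical names, and returning 0 when a ratio is undefined is a convention chosen for this example.

```csharp
using System;

public static class BinaryMetricsSketch
{
    // Returns accuracy, precision, and recall for boolean predictions against boolean labels.
    public static (double Accuracy, double Precision, double Recall) Compute(bool[] predicted, bool[] actual)
    {
        int tp = 0, tn = 0, fp = 0, fn = 0;
        for (int i = 0; i < actual.Length; i++)
        {
            if (predicted[i] && actual[i]) tp++;          // true positive
            else if (predicted[i] && !actual[i]) fp++;    // false positive
            else if (!predicted[i] && actual[i]) fn++;    // false negative
            else tn++;                                    // true negative
        }

        double accuracy = (double)(tp + tn) / actual.Length;
        // Guard against division by zero when no positives are predicted or present.
        double precision = tp + fp == 0 ? 0 : (double)tp / (tp + fp);
        double recall = tp + fn == 0 ? 0 : (double)tp / (tp + fn);
        return (accuracy, precision, recall);
    }
}
```

Precision answers how many predicted positives were correct, and recall answers how many actual positives were found.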

Analyzing results

Analyze the results to understand the model’s strengths and weaknesses. Consider factors like sensitivity to noise and outliers.

- Sensitivity: AdaBoost may struggle with noisy data.
- Efficiency: Decision stumps are cheap to train, though training time grows with the number of rounds.
- Overfitting: AdaBoost often resists overfitting on clean data, but noisy labels and too many rounds can still cause it.

Understanding these aspects helps refine the model further and shows where AdaBoost binary classification in C# is a good fit for a given task.

Optimizing the AdaBoost Model

Tuning Parameters

Adjusting learning rate

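A learning rate, also called shrinkage, scales down each weak learner’s contribution to the ensemble. A smaller learning rate makes the model learn more gradually, which usually calls for more iterations but can reduce overfitting. Not every implementation exposes this setting: Accord.NET 3.x’s `AdaBoost<TModel>` does not appear to document a learning-rate property, so the closest built-in controls there are the stopping criteria:

```csharp
adaBoost.Tolerance = 1e-3; // convergence tolerance on the error
adaBoost.Threshold = 0.5;  // stop when a weak learner's weighted error reaches this limit
```

Experiment with different values to find the optimal setting for your dataset, and check the property names against your installed version of the library.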
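In implementations that do support a learning rate, one common convention is to multiply each weak learner's vote weight by it. The sketch below shows that convention in plain C#; it is an assumption about how shrinkage is typically applied, not a description of Accord.NET, and `ShrinkageSketch` and `LearnerWeight` are hypothetical names.

```csharp
using System;

public static class ShrinkageSketch
{
    // Vote weight of a weak learner under the discrete AdaBoost formula, scaled by a learning rate.
    // weightedError must lie strictly between 0 and 1; learningRate = 1.0 gives classic AdaBoost.
    public static double LearnerWeight(double weightedError, double learningRate)
        => learningRate * 0.5 * Math.Log((1 - weightedError) / weightedError);
}
```

With a weighted error of 0.25, a learning rate of 1.0 gives a vote weight of about 0.549, and a learning rate of 0.1 gives about 0.055. Each learner then moves the sample weights less, so more rounds are needed to reach the same fit.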

Number of iterations

The number of iterations sets how many boosting rounds run, and therefore how many weak learners the ensemble can contain. More iterations can lead to better accuracy. However, too many iterations may cause overfitting and lengthen training. In Accord.NET 3.x the property is named `MaxIterations`:

```csharp
adaBoost.MaxIterations = 50;
```

Monitoring the model’s performance on a validation set helps in deciding the right number of iterations. Stop adding rounds once the validation error starts to rise.

Handling Overfitting

Techniques to prevent overfitting

Overfitting occurs when a model learns the training data too well, including its noise. This reduces its ability to generalize to new data. Use techniques like early stopping and regularization to prevent overfitting. Early stopping involves halting the training process once the model’s performance on a validation set starts to degrade.

For boosting, regularization usually means limiting the ensemble rather than penalizing individual coefficients: keep the weak learners simple, cap the number of rounds, or shrink each learner’s contribution. This keeps the model simpler and less prone to overfitting.
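Early stopping can be sketched independently of any library: record the validation error after each boosting round, then keep the number of rounds that gave the lowest error before a run of rounds without improvement. The code below is a minimal illustration in plain C#; `EarlyStoppingSketch`, `BestRound`, and the `patience` parameter are hypothetical names for this example.

```csharp
using System;
using System.Collections.Generic;

public static class EarlyStoppingSketch
{
    // Given the validation error measured after each boosting round, returns how many rounds
    // to keep: the round with the lowest error seen before 'patience' consecutive rounds
    // pass without improvement.
    public static int BestRound(IReadOnlyList<double> validationErrors, int patience)
    {
        int bestRound = 0;
        double bestError = double.MaxValue;
        for (int round = 0; round < validationErrors.Count; round++)
        {
            if (validationErrors[round] < bestError)
            {
                bestError = validationErrors[round];
                bestRound = round + 1;               // rounds are counted from 1
            }
            else if (round + 1 - bestRound >= patience)
            {
                break;                               // no improvement for 'patience' rounds
            }
        }
        return bestRound;
    }
}
```

A larger `patience` tolerates temporary plateaus in the validation error at the cost of extra training rounds.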

Cross-validation methods

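Cross-validation is a powerful method to evaluate the model’s performance. It involves splitting the dataset into multiple parts, training the model on some parts, and validating it on the others. This shows how the model performs on data it was not trained on. Accord.NET 3.x provides this through `CrossValidation.Create` in the `Accord.MachineLearning.Performance` namespace, with `ZeroOneLoss` from `Accord.Math.Optimization.Losses`. The snippet follows the pattern in the library’s documentation and should be checked against your installed version:

```csharp
var cv = CrossValidation.Create(
    k: 5, // number of folds
    learner: (p) => new AdaBoost<DecisionStump>() { Learner = (q) => new ThresholdLearning(), MaxIterations = nLearners },
    loss: (actual, expected, p) => new ZeroOneLoss(expected).Loss(actual), // misclassification rate
    fit: (teacher, x, y, w) => teacher.Learn(x, y, w),
    x: inputs, y: outputs);

var result = cv.Learn(inputs, outputs);

Console.WriteLine("Cross-validated accuracy: " + (1 - result.Validation.Mean));
```

The loss is an error rate, so accuracy is one minus the mean validation loss; `result.Training.Mean` reports the error on the training folds and should not be quoted as cross-validated accuracy. Cross-validation provides a robust way to assess the model and to compare parameter settings.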
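To make the splitting step itself concrete, here is a library-free sketch of how k-fold index sets can be generated. It illustrates the idea only and is not how Accord.NET partitions data internally; `KFoldSketch`, `Split`, and the fixed default seed are hypothetical choices for this example.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;

public static class KFoldSketch
{
    // Shuffles the sample indices with a fixed seed and deals them into k folds.
    // Each fold serves once as the validation set; the remaining folds form the training set.
    public static IEnumerable<(int[] Train, int[] Validation)> Split(int sampleCount, int k, int seed = 0)
    {
        var random = new Random(seed);
        int[] shuffled = Enumerable.Range(0, sampleCount).OrderBy(index => random.Next()).ToArray();

        for (int fold = 0; fold < k; fold++)
        {
            int[] validation = shuffled.Where((index, position) => position % k == fold).ToArray();
            int[] train = shuffled.Where((index, position) => position % k != fold).ToArray();
            yield return (train, validation);
        }
    }
}
```

With 10 samples and 5 folds, each split trains on 8 indices and validates on the remaining 2, and every sample is used for validation exactly once across the folds.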

Applications of AdaBoost

AdaBoost offers a versatile approach to solving complex problems. The algorithm finds application in various fields. Each use case highlights the strengths of AdaBoost.

Real-World Use Cases

Image recognition

Image recognition is a classic application of AdaBoost. The Viola-Jones face detector, for example, uses AdaBoost to select and combine simple image features. During training, the algorithm concentrates on misclassified images, so later weak learners target the hard cases. Decision stumps often serve as the weak learners. Because AdaBoost is sensitive to label noise, clean training labels matter.

- Handling Noise: AdaBoost needs reasonably clean labels, since mislabeled images keep gaining weight.
- Improved Predictions: Later learners target the difficult cases.
- Pattern Recognition: The ensemble combines many simple feature tests.

Fraud detection

Fraud detection is another common use case. Boosting reduces bias compared with a single weak model, which can improve detection accuracy. Fraud datasets are typically imbalanced and noisy, so careful label cleaning and handling of class imbalance usually accompany AdaBoost. The model does not adapt on its own after training; retraining on recent data is how it picks up new fraudulent patterns.

- Robust Performance: Combining many weak learners is more accurate than relying on one.
- Bias Reduction: Boosting mainly reduces bias.
- Adaptability: Retraining on recent data picks up new patterns.

Advantages and Limitations

Benefits of using AdaBoost

AdaBoost offers several advantages in machine learning. The algorithm combines multiple weak learners into a strong classifier, which typically improves accuracy over any single weak learner. On clean data it often resists overfitting, and it has few parameters to tune. It works with different kinds of weak learners and is a natural fit for binary classification tasks.

- Improved Accuracy: The ensemble outperforms its individual weak learners.
- Reduced Overfitting: On clean data, AdaBoost often resists overfitting.
- Versatility: AdaBoost works with different weak learners and datasets.

Potential challenges

Despite its benefits, AdaBoost faces certain challenges. The algorithm may struggle with extremely noisy data. Users need to carefully tune parameters for optimal performance. AdaBoost requires sufficient computational resources. The model’s complexity increases with more iterations. Users must balance accuracy and computational efficiency.

- Noise Sensitivity: AdaBoost may struggle with excessive noise.
- Parameter Tuning: Users need to adjust settings carefully.
- Resource Requirements: The algorithm demands computational power.

Implementing AdaBoost binary classification in C# provides a practical tool for these applications. The algorithm’s simplicity and accuracy make it a popular choice in machine learning.

AdaBoost is a powerful tool in machine learning. The algorithm enhances model performance by focusing on misclassified data points. You can experiment with AdaBoost in C# to explore its potential, and adjusting parameters offers opportunities for optimization. AdaBoost proves effective in binary classification tasks, mainly by reducing bias, though it remains sensitive to noisy labels, so clean data and validation matter. With those caveats in mind, AdaBoost can tackle complex problems in various domains.